The Crowd Lab had two posters/demos accepted for AAAI HCOMP 2019! Both of these papers involved substantial contributions from our summer REU interns, who will be attending the conference at Skamania Lodge, Washington, to present their work.

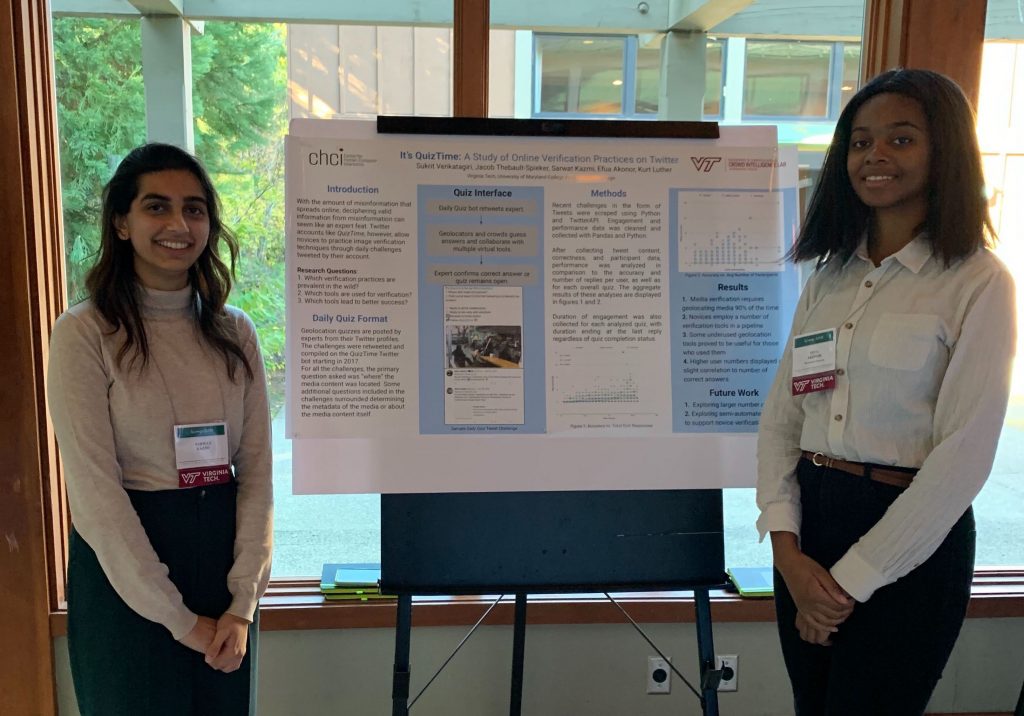

“It’s QuizTime: A study of online verification practices on Twitter” was led by Crowd Lab Ph.D. student Sukrit Venkatagiri, with co-authors Jacob Thebault-Spieker, Sarwat Kazmi, and Efua Akonor. Sarwat and Efua were summer REU interns in the Crowd Lab from the University of Maryland and Wellesley College, respectively. The abstract for the poster is:

Misinformation poses a threat to public health, safety, and democracy. Training novices to debunk visual misinformation with image verification techniques has shown promise, yet little is known about how novices do so in the wild, and what methods prove effective. Thus, we studied 225 verification challenges posted by experts on Twitter over one year with the aim of improving novices’ skills. We collected, annotated, and analyzed these challenges and over 3,100 replies by 304 unique participants. We find that novices employ multiple tools and approaches, and techniques like collaboration and reverse image search significantly improve performance.

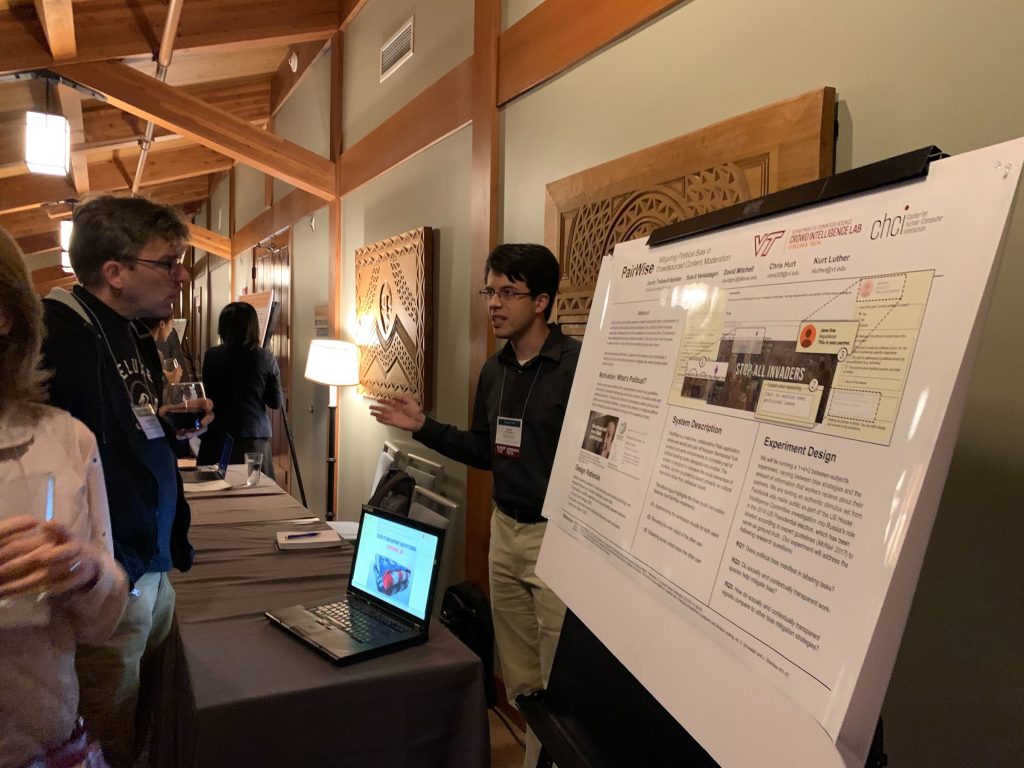

“PairWise: Mitigating political bias in crowdsourced content moderation” was led by Crowd Lab postdoc Jacob Thebault-Spieker, with co-authors Sukrit Venkatagiri, David Mitchell, and Chris Hurt. David was a summer REU intern from the University of Illinois, and Chris was a Virginia Tech undergraduate. The abstract for the demo is:

Crowdsourced labeling of political social media content is an area of increasing interest, due to the contextual nature of political content. However, there are substantial risks of human biases causing data to be labelled incorrectly, possibly advantaging certain political groups over others. Inspired by the social computing theory of social translucence and findings from social psychology, we built PairWise, a system designed to facilitate interpersonal accountability and help mitigate biases in political content labelling.